Picture by Writer

Working giant language fashions (LLMs) domestically might be tremendous useful—whether or not you’d prefer to mess around with LLMs or construct extra highly effective apps utilizing them. However configuring your working surroundings and getting LLMs to run in your machine isn’t trivial.

So how do you run LLMs domestically with none of the trouble? Enter Ollama, a platform that makes native growth with open-source giant language fashions a breeze. With Ollama, every part you must run an LLM—mannequin weights and the entire config—is packaged right into a single Modelfile. Assume Docker for LLMs.

On this tutorial, we’ll check out the way to get began with Ollama to run giant language fashions domestically. So let’s get proper into the steps!

Step 1: Obtain Ollama to Get Began

As a primary step, you need to obtain Ollama to your machine. Ollama is supported on all main platforms: MacOS, Home windows, and Linux.

To obtain Ollama, you may both go to the official GitHub repo and comply with the obtain hyperlinks from there. Or go to the official website and obtain the installer if you’re on a Mac or a Home windows machine.

I’m on Linux: Ubuntu distro. So if you happen to’re a Linux person like me, you may run the next command to run the installer script:

$ curl -fsSL https://ollama.com/set up.sh | sh

The set up course of sometimes takes a couple of minutes. Throughout the set up course of, any NVIDIA/AMD GPUs shall be auto-detected. Be sure you have the drivers put in. The CPU-only mode works high quality, too. However it could be a lot slower.

Step 2: Get the Mannequin

Subsequent, you may go to the model library to verify the checklist of all mannequin households at the moment supported. The default mannequin downloaded is the one with the newest tag. On the web page for every mannequin, you may get extra information reminiscent of the scale and quantization used.

You may search by the checklist of tags to find the mannequin that you just need to run. For every mannequin household, there are sometimes foundational fashions of various sizes and instruction-tuned variants. I’m considering working the Gemma 2B mannequin from the Gemma family of lightweight models from Google DeepMind.

You may run the mannequin utilizing the ollama run command to tug and begin interacting with the mannequin straight. Nevertheless, you too can pull the mannequin onto your machine first after which run it. That is similar to how you’re employed with Docker pictures.

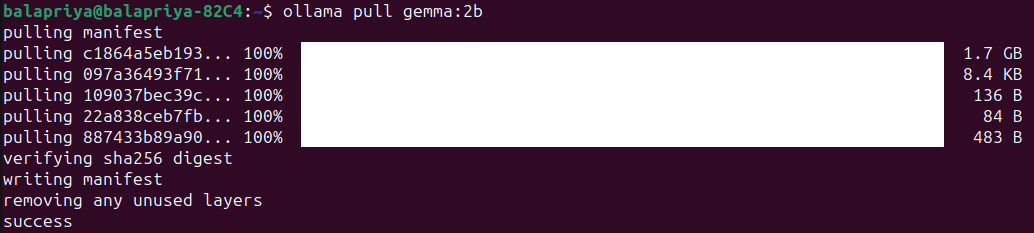

For Gemma 2B, working the next pull command downloads the mannequin onto your machine:

The mannequin is of measurement 1.7B and the pull ought to take a minute or two:

Step 3: Run the Mannequin

Run the mannequin utilizing the ollama run command as proven:

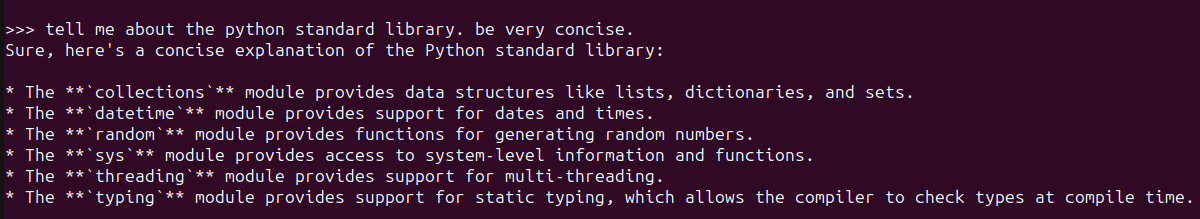

Doing so will begin an Ollama REPL at which you’ll be able to work together with the Gemma 2B mannequin. Right here’s an instance:

For a easy query in regards to the Python commonplace library, the response appears fairly okay. And contains most steadily used modules.

Step 4: Customise Mannequin Habits with System Prompts

You may customise LLMs by setting system prompts for a selected desired habits like so:

- Set system immediate for desired habits.

- Save the mannequin by giving it a reputation.

- Exit the REPL and run the mannequin you simply created.

Say you need the mannequin to at all times clarify ideas or reply questions in plain English with minimal technical jargon as attainable. Right here’s how one can go about doing it:

>>> /set system For all questions requested reply in plain English avoiding technical jargon as a lot as attainable

Set system message.

>>> /save ipe

Created new mannequin 'ipe'

>>> /bye

Now run the mannequin you simply created:

Right here’s an instance:

Step 5: Use Ollama with Python

Working the Ollama command-line shopper and interacting with LLMs domestically on the Ollama REPL is an effective begin. However typically you’ll need to use LLMs in your functions. You may run Ollama as a server in your machine and run cURL requests.

However there are easier methods. In the event you like utilizing Python, you’d need to construct LLM apps and listed here are a pair methods you are able to do it:

- Utilizing the official Ollama Python library

- Utilizing Ollama with LangChain

Pull the fashions you must use earlier than you run the snippets within the following sections.

Utilizing the Ollama Python Library

You should utilize the Ollama Python library you may set up it utilizing pip like so:

There may be an official JavaScript library too, which you need to use if you happen to favor creating with JS.

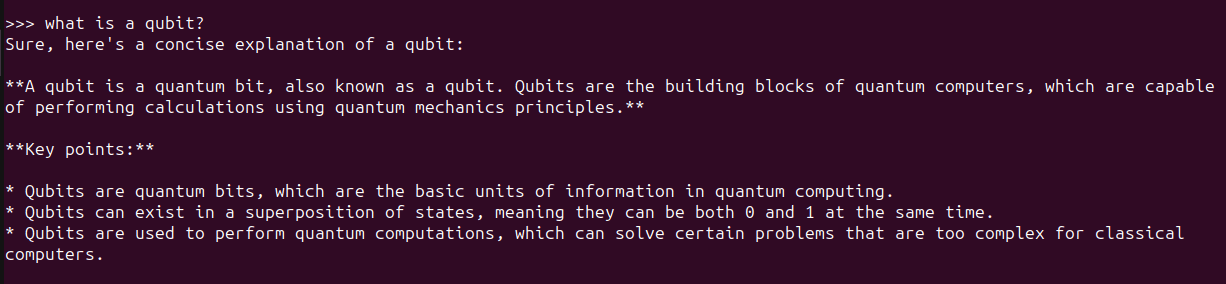

As soon as you put in the Ollama Python library, you may import it in your Python utility and work with giant language fashions. Here is the snippet for a easy language technology activity:

import ollama

response = ollama.generate(mannequin="gemma:2b",

immediate="what's a qubit?")

print(response['response'])

Utilizing LangChain

One other means to make use of Ollama with Python is utilizing LangChain. If in case you have current tasks utilizing LangChain it is simple to combine or change to Ollama.

Be sure you have LangChain put in. If not, set up it utilizing pip:

Here is an instance:

from langchain_community.llms import Ollama

llm = Ollama(mannequin="llama2")

llm.invoke("inform me about partial capabilities in python")

Utilizing LLMs like this in Python apps makes it simpler to change between completely different LLMs relying on the appliance.

Wrapping Up

With Ollama you may run giant language fashions domestically and construct LLM-powered apps with only a few traces of Python code. Right here we explored the way to work together with LLMs on the Ollama REPL in addition to from inside Python functions.

Subsequent we’ll strive constructing an app utilizing Ollama and Python. Till then, if you happen to’re seeking to dive deep into LLMs take a look at 7 Steps to Mastering Large Language Models (LLMs).

Bala Priya C is a developer and technical author from India. She likes working on the intersection of math, programming, knowledge science, and content material creation. Her areas of curiosity and experience embody DevOps, knowledge science, and pure language processing. She enjoys studying, writing, coding, and low! At the moment, she’s engaged on studying and sharing her information with the developer group by authoring tutorials, how-to guides, opinion items, and extra. Bala additionally creates partaking useful resource overviews and coding tutorials.