There are a number of change data capture techniques available when using a MySQL or Postgres database. Some of these techniques overlap and are very similar regardless of which database technology you are using; others are different. Ultimately, we need a way to specify and detect what has changed and a means of sending those changes to a target system.

This post assumes you are familiar with change data capture. If not, read the previous introductory post here: “Change Data Capture: What It Is and How To Use It.” In this post, we’re going to dive deeper into the different ways you can implement CDC if you have either a MySQL or Postgres database, and compare the approaches.

CDC with Update Timestamps and Kafka

One of the simplest ways to implement a CDC solution in both MySQL and Postgres is by using update timestamps. Any time a record is inserted or modified, its update timestamp is set to the current date and time, which tells you when that record was last modified.

We can then either build a bespoke solution that polls the database for new or changed records and writes them to a target system or to a CSV file to be processed later, or we can use a pre-built solution like Kafka and Kafka Connect. Kafka Connect has pre-defined connectors that poll tables and publish rows to a queue when their update timestamp is greater than that of the last processed record. Kafka Connect also has connectors for target systems that can then write these records for you.
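As an illustration of the bespoke route, one run of a MySQL polling job that exports changed rows to CSV might look like the sketch below. It assumes the `person` table defined later in this post. The `@last_processed_ts` variable, its value and the output path are hypothetical. A real job would persist the high-water mark between runs, because MySQL user variables only live for one session.

```sql
-- Hypothetical high-water mark: the latest update_timestamp exported by
-- the previous run. A real job would load this from its own storage.
SET @last_processed_ts = '2021-01-01 00:00:00';

-- Export every row changed since the last run to a CSV file.
-- The MySQL server writes the file itself. The directory must be
-- permitted by secure_file_priv, and the file must not already exist.
SELECT id, firstname, lastname, email, update_timestamp
FROM person
WHERE update_timestamp > @last_processed_ts
ORDER BY update_timestamp
INTO OUTFILE '/var/lib/mysql-files/person_changes.csv'
FIELDS TERMINATED BY ',' OPTIONALLY ENCLOSED BY '"'
LINES TERMINATED BY '\n';

-- The job would then record the largest exported update_timestamp
-- as the starting point for its next run.
```

Tracking that high-water mark, handling retries and delivering the file elsewhere is the work that Kafka Connect takes off your hands.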

Fetching the Updates and Publishing them to the Target Database using Kafka

Kafka is an event streaming platform that follows a pub-sub model. Publishers send data to a topic (queue), and one or more consumers can then read messages from it. If we wanted to capture changes from a MySQL or Postgres database and send them to a data warehouse or analytics platform, we would first need to set up a publisher to send the changes, and then a consumer that could read the changes and apply them to our target system.

To simplify this process, we can use Kafka Connect. Kafka Connect acts as a middleman, with pre-built connectors to both publish and consume data that can simply be configured with a config file.

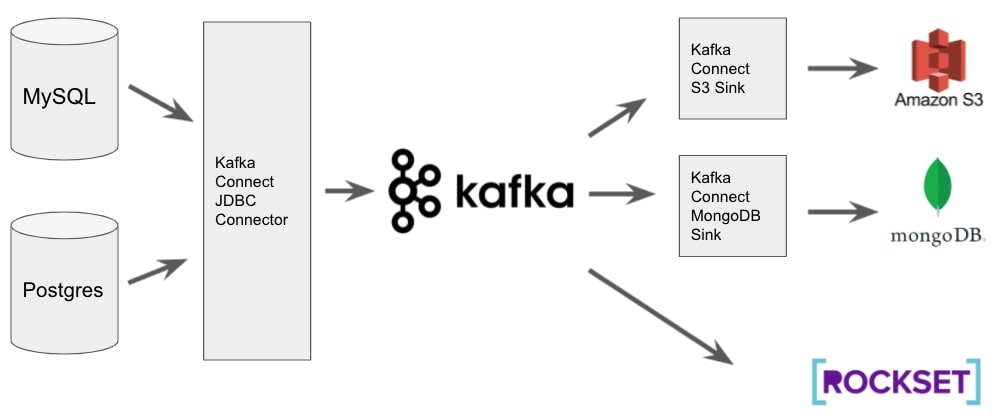

Fig 1. CDC architecture with MySQL, Postgres and Kafka

As shown in Fig 1, we can configure a JDBC connector for Kafka Connect that specifies which table we want to consume, how to detect changes (in our case, by using the update timestamp) and which topic (queue) to publish them to. Using Kafka Connect to handle this means that all the logic required to detect which rows have changed is done for us. We only need to ensure that the update timestamp field is kept up to date (covered in the next section), and Kafka Connect will take care of:

- Keeping track of the maximum update timestamp of the latest record it has published

- Polling the database for any records with a newer update timestamp

- Writing the data to a queue to be consumed downstream
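To make the polling step concrete, the query below is a rough picture of one poll in timestamp mode against the `person` table. Confluent's JDBC source connector, for example, uses this approach when configured with `mode=timestamp` and `timestamp.column.name=update_timestamp`. This is an approximation, not the connector's exact SQL. The connector binds both bounds itself, so the literal timestamps here are placeholders.

```sql
-- Illustrative only: the approximate shape of a single timestamp-mode poll.
SELECT *
FROM person
WHERE update_timestamp > '2021-06-01 12:00:00'  -- latest timestamp already published
  AND update_timestamp < '2021-06-01 12:05:00'  -- upper bound close to "now"
ORDER BY update_timestamp ASC;                  -- publish in change order
```

The upper bound keeps the poll from racing ahead of rows that are still being written. The ordering lets the connector advance its stored maximum timestamp as it publishes each row.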

We can then either configure “sinks”, which define where to output the data, or have the target system talk to Kafka directly. Again, Kafka Connect has many pre-defined sink connectors that we can easily configure to output the data to many different target systems. Services like Rockset can talk to Kafka directly and therefore don’t require a sink to be configured.

Again, using Kafka Connect means that, out of the box, not only can we write data to many different locations with little or no coding required, but we also get Kafka’s throughput and fault tolerance, which can help us scale our solution in the future.

For this to work, we need to make sure that the tables we want to capture have update timestamp fields, and that these fields are always updated whenever a record is updated. In the next section, we cover how to implement this in both MySQL and Postgres.
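If an existing table does not yet have such a column, one way to add it is shown below. This MySQL sketch assumes the `person` table used throughout this post, and the index name is hypothetical. The same two statements should also run on Postgres. Since every poll filters and sorts on the timestamp, an index on it is usually worth considering.

```sql
-- Add an update timestamp to a table that lacks one.
ALTER TABLE person
    ADD COLUMN update_timestamp TIMESTAMP DEFAULT CURRENT_TIMESTAMP;

-- Polls filter and sort on update_timestamp, so an index lets them
-- avoid scanning the whole table on every run.
CREATE INDEX idx_person_update_timestamp ON person (update_timestamp);
```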

Using Triggers for Update Timestamps (MySQL & Postgres)

MySQL and Postgres both support triggers. Triggers allow you to perform actions in the database either immediately before or after another action happens. For this example, whenever an update command is executed against a row in our source table, we want a trigger to set that row’s update timestamp to the current date and time as part of the same update.

We only need the trigger to run on an update command because, in both MySQL and Postgres, you can set the update timestamp column to automatically use the current date and time when a new record is inserted. The table definition in MySQL would look as follows (the Postgres syntax is very similar). Note the DEFAULT CURRENT_TIMESTAMP keywords when declaring the update_timestamp column: they ensure that when a record is inserted, the current date and time are used by default.

```sql
CREATE TABLE person
(
    id INT(6) UNSIGNED AUTO_INCREMENT PRIMARY KEY,
    firstname VARCHAR(30) NOT NULL,
    lastname VARCHAR(30) NOT NULL,
    email VARCHAR(50),
    -- Set automatically to the insert time for new rows
    update_timestamp TIMESTAMP DEFAULT CURRENT_TIMESTAMP
);
```

This means our update_timestamp column is set to the current date and time for any new records. Now we need to define a trigger that will update this field every time a record is updated in the person table. The MySQL implementation is simple and looks as follows.

```sql
DELIMITER $$

CREATE TRIGGER user_update_timestamp
BEFORE UPDATE ON person
FOR EACH ROW BEGIN
    -- Overwrite the timestamp on the row being updated
    SET NEW.update_timestamp = CURRENT_TIMESTAMP;
END$$

DELIMITER ;
```
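A quick way to check that the trigger works is to insert a row, update it and compare the timestamps. This is a MySQL usage sketch, and the sample names and email addresses are made up. The pause matters because a plain TIMESTAMP column only stores whole seconds.

```sql
INSERT INTO person (firstname, lastname, email)
VALUES ('Ada', 'Lovelace', 'ada@example.com');
SET @new_id = LAST_INSERT_ID();

-- Timestamp set by the column default on insert
SELECT id, update_timestamp FROM person WHERE id = @new_id;

-- Wait so the change is visible at one-second precision
DO SLEEP(2);

UPDATE person SET email = 'ada.lovelace@example.com' WHERE id = @new_id;

-- Timestamp set by the trigger; it should be later than the first value
SELECT id, update_timestamp FROM person WHERE id = @new_id;
```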

For Postgres, you first have to define a function that sets the update_timestamp field to the current timestamp, and then define a trigger that executes the function. This is a subtle difference, but it adds slightly more overhead, as you now have both a function and a trigger to maintain in the Postgres database.
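A minimal Postgres sketch of that function-plus-trigger pair is shown below, together with a Postgres version of the `person` table. The function name `set_update_timestamp` is hypothetical. In Postgres, `CURRENT_TIMESTAMP` returns the start time of the current transaction, so all rows updated in one transaction receive the same value.

```sql
-- SERIAL stands in for MySQL's AUTO_INCREMENT
CREATE TABLE person (
    id               SERIAL PRIMARY KEY,
    firstname        VARCHAR(30) NOT NULL,
    lastname         VARCHAR(30) NOT NULL,
    email            VARCHAR(50),
    update_timestamp TIMESTAMP DEFAULT CURRENT_TIMESTAMP
);

-- Trigger function: overwrite the timestamp on the row being updated
CREATE OR REPLACE FUNCTION set_update_timestamp()
RETURNS TRIGGER AS $$
BEGIN
    NEW.update_timestamp := CURRENT_TIMESTAMP;
    RETURN NEW;  -- a BEFORE trigger must return the row to be written
END;
$$ LANGUAGE plpgsql;

-- Run the function before every row update on person.
-- On PostgreSQL 10 and older, write EXECUTE PROCEDURE instead.
CREATE TRIGGER user_update_timestamp
BEFORE UPDATE ON person
FOR EACH ROW
EXECUTE FUNCTION set_update_timestamp();
```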

Using Auto-Update Syntax in MySQL

If you’re using MySQL, there’s another, much simpler way of implementing an update timestamp. When defining the table in MySQL, you can define what value a column should be set to when the record is updated, which in our case is the current timestamp.

```sql
CREATE TABLE person
(
    id INT(6) UNSIGNED AUTO_INCREMENT PRIMARY KEY,
    firstname VARCHAR(30) NOT NULL,
    lastname VARCHAR(30) NOT NULL,
    email VARCHAR(50),
    -- Set on insert and refreshed automatically on every update
    update_timestamp TIMESTAMP DEFAULT CURRENT_TIMESTAMP ON UPDATE CURRENT_TIMESTAMP
);
```

The benefit of this is that we no longer have to maintain the trigger code. Postgres has no equivalent column option, so there the function and trigger are still required.
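If a MySQL table is already using the trigger approach, a sketch of switching it over is shown below. It assumes the trigger and table names used earlier. Per the MySQL documentation, an ON UPDATE CURRENT_TIMESTAMP column is only refreshed when some other column in the row actually changes value.

```sql
-- Remove the trigger so the timestamp isn't maintained twice
DROP TRIGGER IF EXISTS user_update_timestamp;

-- Let MySQL refresh the column automatically on update
ALTER TABLE person
    MODIFY update_timestamp TIMESTAMP
        DEFAULT CURRENT_TIMESTAMP ON UPDATE CURRENT_TIMESTAMP;
```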

CDC with Debezium, Kafka and Amazon DMS

Another option for implementing a CDC solution is to use the native database logs that both MySQL and Postgres can produce when configured to do so. These logs record every change made to the database, and they can then be used to replicate those changes in a target system.

The advantage of using database logs is that, firstly, you don’t need to write any code or add any extra logic to your tables, as you do with update timestamps. Secondly, they also capture deletions of records, something that isn’t possible with update timestamps.

In MySQL you do this by turning on the binlog, and in Postgres you configure the Write Ahead Log (WAL) for replication. Once the database is configured to write these logs, you can choose a CDC system to help capture the changes. Two popular options are Debezium and AWS Database Migration Service (DMS). Both of these systems utilise the binlog for MySQL and the WAL for Postgres.
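The sketch below shows what "turning on" the logs can look like on self-managed servers. It contains two separate snippets, one for each database. Debezium's documentation asks for a row-based MySQL binlog with `binlog_row_image = FULL`, and for `wal_level = logical` on Postgres. On managed services such as Amazon RDS, these settings are changed through parameter groups rather than SQL.

```sql
-- MySQL: confirm binary logging is on and row-based.
-- log_bin cannot be changed at runtime; enable it in the server
-- configuration (it is on by default in MySQL 8.0).
SHOW VARIABLES LIKE 'log_bin';
SHOW VARIABLES LIKE 'binlog_format';     -- expected: ROW
SHOW VARIABLES LIKE 'binlog_row_image';  -- expected: FULL

-- PostgreSQL: enable logical decoding of the WAL.
-- ALTER SYSTEM writes postgresql.auto.conf; the new value only takes
-- effect after a server restart, which SHOW can then confirm.
ALTER SYSTEM SET wal_level = logical;
SHOW wal_level;
```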

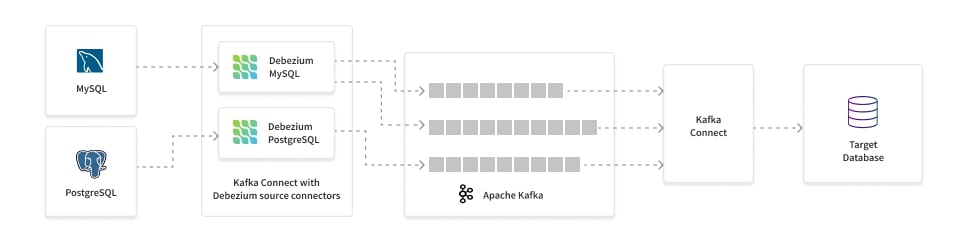

Debezium works natively with Kafka. It picks up the related adjustments, converts them right into a JSON object that accommodates a payload describing what has modified and the schema of the desk and places it on a Kafka subject. This payload accommodates all of the context required to use these adjustments to our goal system, we simply want to write down a shopper or use a Kafka Join sink to write down the info. As Debezium makes use of Kafka, we get all the advantages of Kafka corresponding to fault tolerance and scalability.

Fig 2. Debezium CDC structure for MySQL and Postgres
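Debezium also needs a database account that is allowed to read the logs. The sketch below follows the privileges listed in the Debezium documentation. The account name, password and table are placeholders. With the Postgres `pgoutput` plugin, Debezium reads changes through a publication, and `dbz_publication` is the name it looks for by default.

```sql
-- MySQL: account with the privileges Debezium's MySQL connector uses
CREATE USER 'debezium'@'%' IDENTIFIED BY 'change-me';
GRANT SELECT, RELOAD, SHOW DATABASES, REPLICATION SLAVE, REPLICATION CLIENT
    ON *.* TO 'debezium'@'%';

-- PostgreSQL: a login role allowed to use replication
CREATE ROLE debezium WITH LOGIN REPLICATION PASSWORD 'change-me';
GRANT SELECT ON person TO debezium;

-- Publication listing the tables whose changes should be streamed
CREATE PUBLICATION dbz_publication FOR TABLE person;
```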

AWS DMS works in a similar way to Debezium. It supports many different source and target systems and integrates natively with all the popular AWS data services, including Kinesis and Redshift.

The main benefit of using DMS over Debezium is that it’s effectively a “serverless” offering. With Debezium, if you want the flexibility and fault tolerance of Kafka, you have the overhead of deploying a Kafka cluster. DMS, as its name states, is a service. You configure the source and target endpoints, and AWS handles the infrastructure that monitors the database logs and copies the data to the target.
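Some source-side preparation is still needed with DMS. For example, if the source is Amazon RDS for MySQL, the binlogs must be kept long enough for DMS to read them. A minimal sketch using RDS's own stored procedures is shown below. The 24-hour value is an arbitrary example, and the binlog format itself is set to ROW through the DB parameter group. For RDS Postgres sources, the equivalent step is enabling `rds.logical_replication` in the parameter group.

```sql
-- Amazon RDS for MySQL only: retain binlogs for 24 hours
CALL mysql.rds_set_configuration('binlog retention hours', 24);

-- Show the current RDS configuration values, including the retention
CALL mysql.rds_show_configuration;
```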

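However, this serverless approach does have its drawbacks, mainly in its feature set. For example, it is less suited than Debezium to more advanced use cases such as masking sensitive data or routing data to different queues based on its content.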

Which Option for CDC?

When weighing up which pattern to follow, it’s important to assess your specific use case. Using update timestamps works when you only want to capture inserts and updates. If you already have a Kafka cluster, you can get up and running with this very quickly, especially if most tables already include some kind of update timestamp.

If you’d rather go with the database log approach, perhaps because you want exact replication, then you should look at a service like Debezium or AWS DMS. I would suggest first checking which system supports the source and target systems you require. If you have more advanced use cases, such as masking sensitive data or re-routing data to different queues based on its content, then Debezium is probably the best choice. If you’re just looking for simple replication with little overhead, then DMS will work for you, provided it supports your source and target systems.

If you have real-time analytics needs, you may consider using a target database like Rockset as an analytics serving layer. Rockset integrates with MySQL and Postgres, using AWS DMS, to ingest CDC streams and index the data for sub-second analytics at scale. Rockset can also read CDC streams from NoSQL databases, such as MongoDB and Amazon DynamoDB.

The right answer depends on your specific use case, and there are many more options than have been discussed here. These are just some of the more popular ways to implement a modern CDC system.

Lewis Gavin has been a data engineer for five years and has also been blogging about skills within the data community for four years on a personal blog and Medium. During his computer science degree, he worked for the Airbus Helicopter team in Munich, enhancing simulator software for military helicopters. He then went on to work for Capgemini, where he helped the UK government move into the world of Big Data. He is currently using this experience to help transform the data landscape at easyfundraising.org.uk, an online charity cashback site, where he is helping to shape their data warehousing and reporting capability from the ground up.