We’re introducing a new Rockset Integration for Apache Kafka that offers native support for Confluent Cloud and Apache Kafka, making it simpler and faster to ingest streaming data for real-time analytics. This new integration comes on the heels of several new product features that make Rockset more affordable and accessible for real-time analytics, including SQL-based rollups and transformations.

With the Kafka Integration, users no longer need to build, deploy or operate any infrastructure component on the Kafka side. Here’s how Rockset is making it easier to ingest event data from Kafka with this new integration:

- It’s managed entirely by Rockset and can be set up with just a few clicks, in keeping with our philosophy of making real-time analytics accessible.

- The integration is continuous, so any new data in the Kafka topic gets indexed in Rockset, delivering an end-to-end data latency of two seconds.

- The integration is pull-based, ensuring that data can be reliably ingested even in the face of bursty writes, and it requires no tuning on the Kafka side.

- There is no need to pre-create a schema to run real-time analytics on event streams from Kafka. Rockset indexes the entire data stream, so when new fields are added, they’re immediately exposed and made queryable using SQL.

- We’ve also enabled the ingest of historical and real-time streams so that customers can access a 360-degree view of their data, a common real-time analytics use case.
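To make the schemaless point above concrete, here is a minimal sketch of discovering fields per document without declaring a schema first. It is not Rockset's indexing code; the helper `field_paths` and the sample messages are hypothetical, and only `orderunits` is a field name taken from this post.

```python
import json


def field_paths(doc, prefix=""):
    """Yield a dotted path for every leaf field in a nested JSON object."""
    for key, value in doc.items():
        path = f"{prefix}.{key}" if prefix else key
        if isinstance(value, dict):
            yield from field_paths(value, path)
        else:
            yield path


# Made-up JSON messages; the second one introduces a new nested field.
messages = [
    '{"orderid": 1, "orderunits": 5}',
    '{"orderid": 2, "orderunits": 3, "address": {"city": "City A"}}',
]

seen = set()
for raw in messages:
    # Each message is parsed on its own, so new fields simply show up.
    seen.update(field_paths(json.loads(raw)))

print(sorted(seen))
```

The second message adds `address.city` without any change to a schema definition, which is the behavior the bullet describes at a much larger scale.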

In this blog, we introduce how the Kafka Integration with native support for Confluent Cloud and Apache Kafka works and walk through how to run real-time analytics on event streams from Kafka.

A Quick Dip Under the Hood

The new Kafka Integration adopts the Kafka Consumer API, which is a low-level, vanilla Java library that can be easily embedded into applications to tail data from a Kafka topic in real time.

There are two Kafka consumer modes:

- subscription mode, where a group of consumers collaborate in tailing a common set of Kafka topics in a dynamic way, relying on Kafka brokers to provide rebalancing, checkpointing, failure recovery, etc.

- assign mode, where each individual consumer specifies its assigned topic partitions and manages progress checkpointing manually

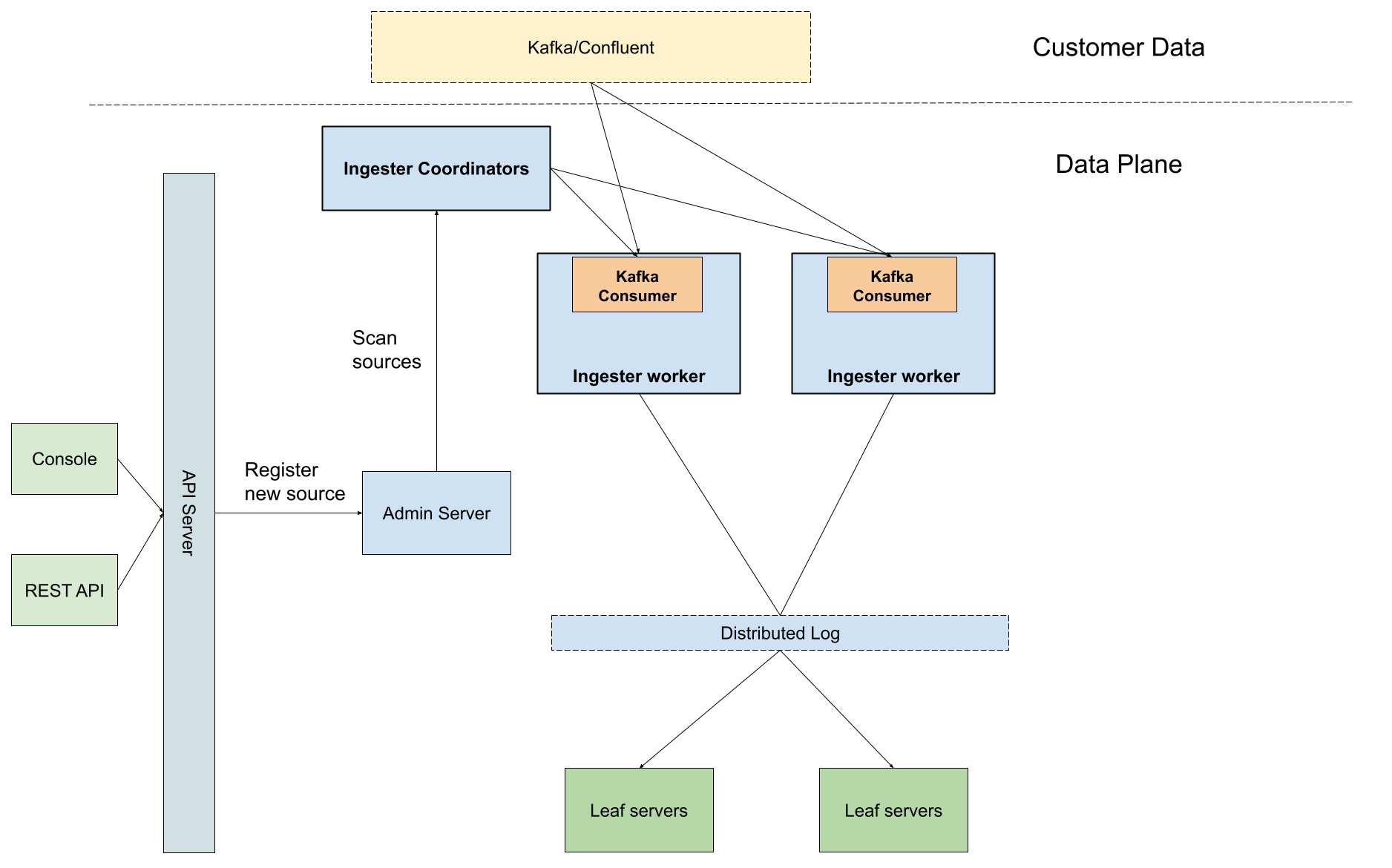

Rockset adopts the assign mode because we’ve already built a general-purpose tailer framework based on the Aggregator Leaf Tailer Architecture (ALT) to handle the heavy lifting, such as progress checkpointing and common failure cases. The consumption offsets are completely managed by Rockset, without saving any information inside the user’s cluster. Each ingestion worker receives its own topic partition assignment and last processed offsets from the ingestion coordinator during initialization, and then leverages the embedded consumer to fetch Kafka topic data.
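The division of labor described above can be sketched with an in-memory stand-in for a topic. This is an illustration under stated assumptions, not Rockset's tailer code and not the Kafka client API: `TOPIC`, `Coordinator` and `run_worker` are hypothetical names, and a Python list plays the role of a partition log.

```python
from dataclasses import dataclass, field

# In-memory stand-in for a Kafka topic: partition id -> ordered records.
# Hypothetical data; a real worker would use an embedded Kafka consumer.
TOPIC = {
    0: ["order-a", "order-b", "order-c"],
    1: ["order-d", "order-e"],
}


@dataclass
class Coordinator:
    """Keeps checkpoints outside the Kafka cluster, as in assign mode."""

    offsets: dict = field(default_factory=dict)  # partition -> next offset

    def assignment_for(self, partition):
        # Hand the worker its partition and the offset to resume from.
        return partition, self.offsets.get(partition, 0)

    def checkpoint(self, partition, next_offset):
        self.offsets[partition] = next_offset


def run_worker(coordinator, partition, max_records):
    """Fetch up to max_records from the assigned partition, then checkpoint."""
    partition, start = coordinator.assignment_for(partition)
    batch = TOPIC[partition][start:start + max_records]
    coordinator.checkpoint(partition, start + len(batch))
    return batch


coordinator = Coordinator()
first = run_worker(coordinator, 0, max_records=2)
# A restarted worker resumes from the coordinator's checkpoint, not offset 0.
second = run_worker(coordinator, 0, max_records=2)
print(first, second, coordinator.offsets)
```

Because the checkpoint lives with the coordinator, nothing about consumption progress has to be written back to the source cluster in this sketch.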

The above diagram shows how the Kafka consumer is embedded into the Rockset tailer framework. A customer creates a new Kafka collection through the API server endpoint, and Rockset stores the collection metadata inside the admin server. Rockset’s ingester coordinator is notified of new sources. When any new Kafka source is observed, the coordinator spawns a reasonable number of worker tasks equipped with Kafka consumers to start fetching data from the customer’s Kafka topic.
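How the coordinator decides on the number of worker tasks is not described here. As one plausible sketch, assuming a hypothetical cap on partitions per worker, the partitions of a topic could be spread round-robin like this:

```python
def plan_workers(num_partitions, max_partitions_per_worker):
    """Spread partition ids across workers round-robin.

    Hypothetical policy: use the fewest workers such that no worker
    holds more than max_partitions_per_worker partitions.
    """
    if num_partitions <= 0 or max_partitions_per_worker <= 0:
        raise ValueError("both arguments must be positive")
    # Ceiling division without importing math.
    num_workers = -(-num_partitions // max_partitions_per_worker)
    plan = [[] for _ in range(num_workers)]
    for partition in range(num_partitions):
        plan[partition % num_workers].append(partition)
    return plan


plan = plan_workers(num_partitions=5, max_partitions_per_worker=2)
print(plan)
```

Each inner list is the partition assignment one worker task would receive at initialization, together with its last processed offsets.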

Kafka and Rockset for Real-Time Analytics

As soon as event data lands in Kafka, Rockset automatically indexes it for sub-second SQL queries. You can search, aggregate and join data across Kafka topics and other data sources, including data in S3, MongoDB, DynamoDB, Postgres, and more. Next, simply turn the SQL query into an API to serve data in your application.

A sample architecture for real-time analytics on streaming data from Apache Kafka

Let’s walk through a step-by-step example of analyzing real-time order data using a mock dataset from Confluent Cloud’s datagen. In this example, we’ll assume that you already have a Kafka cluster and topic set up.

An Easy 5 Minutes to Get Set Up

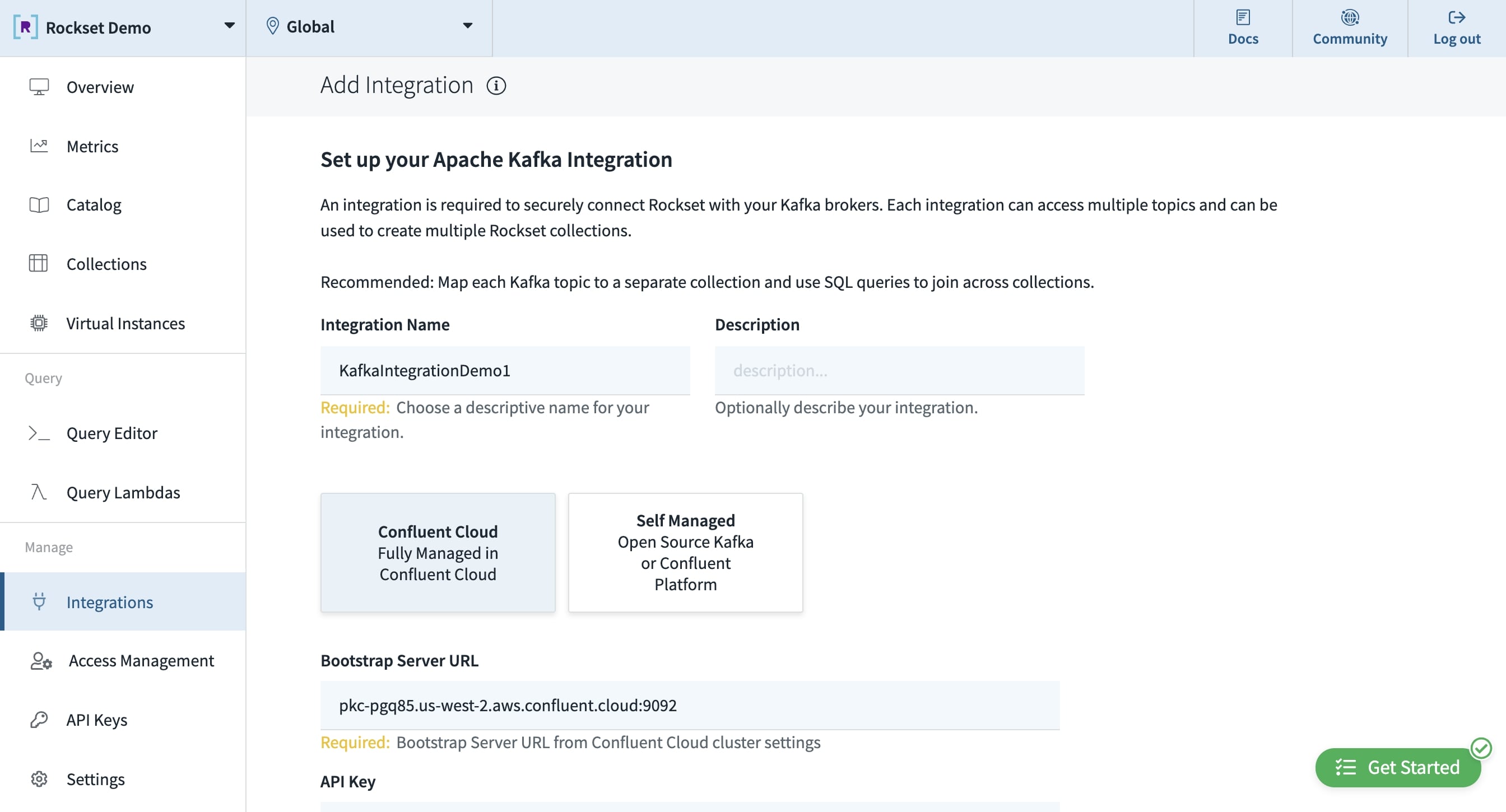

Set Up the Kafka Integration

To set up Rockset’s Kafka Integration, first select the Kafka source, either Apache Kafka or Confluent Cloud. Enter the configuration information, including the Kafka-provided endpoint to connect to and, if you’re using the Confluent platform, the API key/secret. For the first version of this release, we’re only supporting JSON data (stay tuned for Avro!).

The Rockset console where the Apache Kafka Integration is set up.

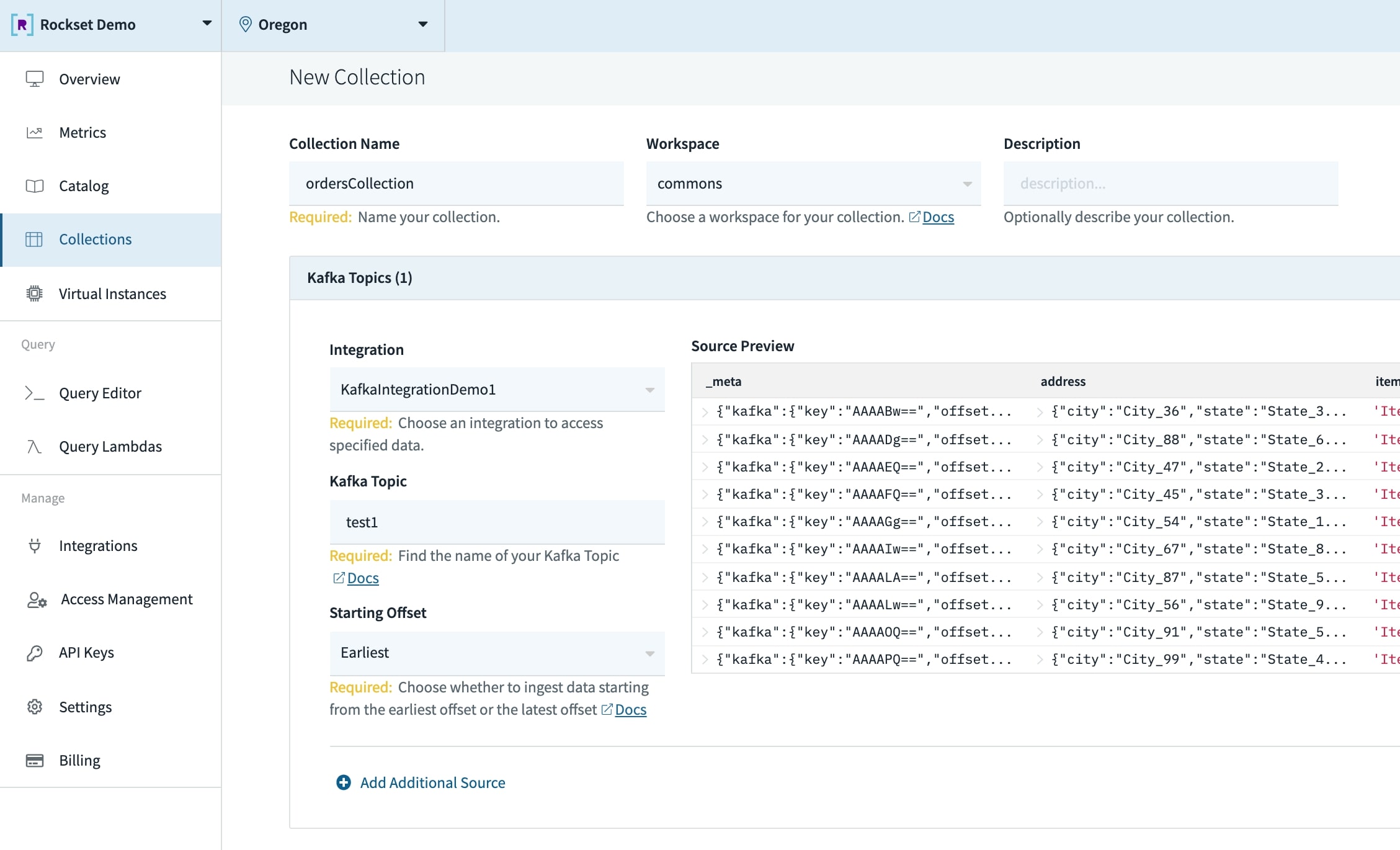

Create a Collection

A collection in Rockset is similar to a table in the SQL world. To create a collection, simply add in details including the name, description, integration and Kafka topic. The starting offset enables you to backfill historical data as well as capture the latest streams.
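The effect of the starting offset can be sketched with a list standing in for one topic partition. The policy names `"earliest"` and `"latest"` and the function `resolve_start_offset` are hypothetical labels for this illustration, not the option names shown in the Rockset console.

```python
def resolve_start_offset(partition_log, policy):
    """Return the offset a new collection would start reading from."""
    if policy == "earliest":
        return 0  # backfill everything already in the partition
    if policy == "latest":
        return len(partition_log)  # only records that arrive from now on
    raise ValueError(f"unknown policy: {policy}")


# Three records exist before the collection is created.
log = ["o1", "o2", "o3"]
earliest = resolve_start_offset(log, "earliest")
latest = resolve_start_offset(log, "latest")

# A new record arrives after the collection is created.
log.append("o4")

backfilled = log[earliest:]  # historical data plus the new record
live_only = log[latest:]     # the new record only
print(backfilled, live_only)
```

Starting from the earliest offset gives the combined historical and real-time view, while starting from the latest offset captures only new events.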

A Rockset collection that’s pulling data from Apache Kafka.

Transform and Rollup Data

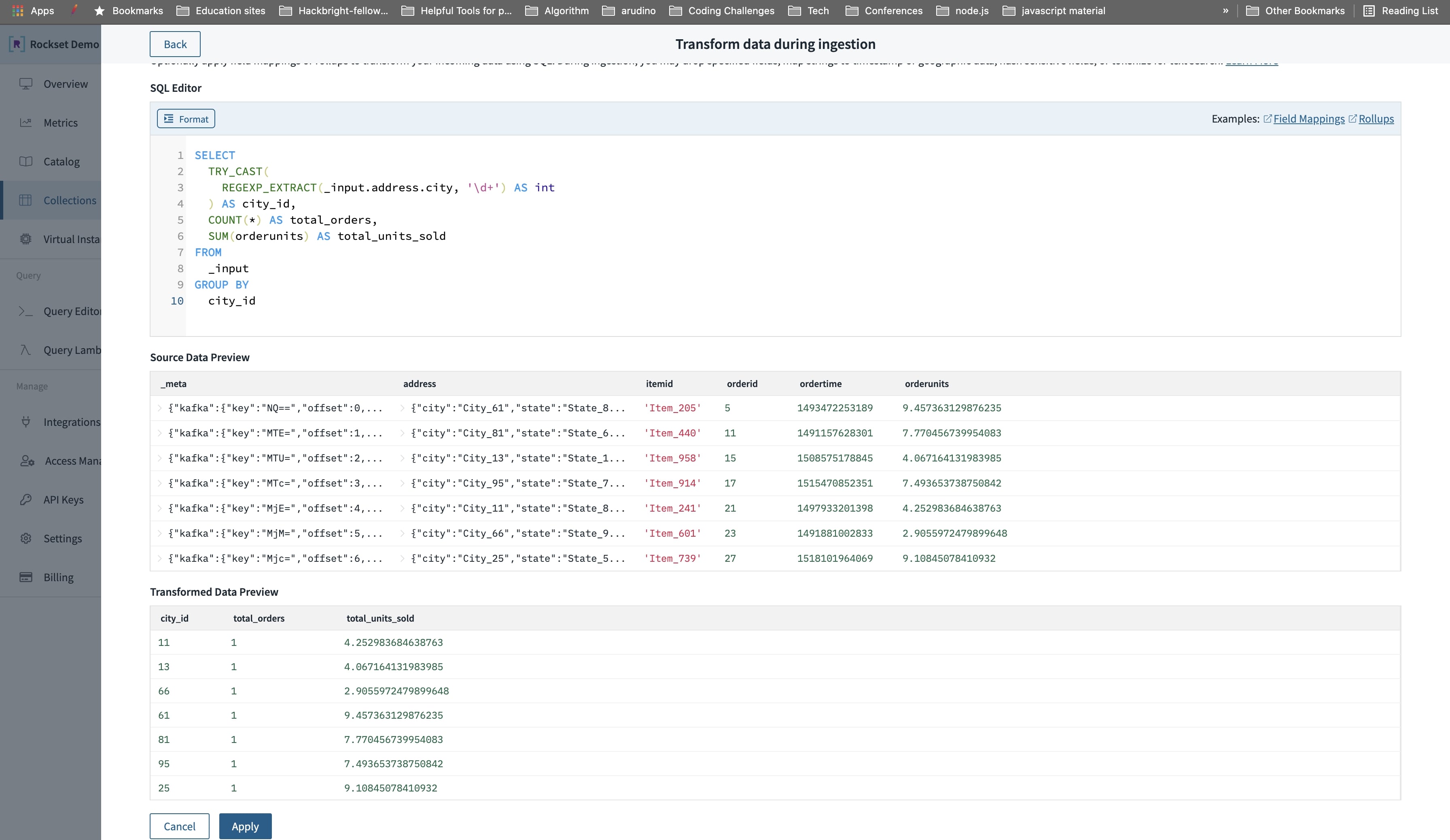

You also have the option at ingest time to transform and rollup event data using SQL to reduce the storage size in Rockset. Rockset rollups are able to support complex SQL expressions and roll up data correctly and accurately even for out-of-order data.

In this example, we’ll do a rollup to aggregate the total units sold (SUM(orderunits)) and total orders made (COUNT(*)) in a particular city.
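To show what this aggregation computes, here is a small sketch that runs an equivalent query locally with Python's built-in `sqlite3`. SQLite is only a stand-in: this is a batch query, not Rockset's ingest-time rollup or its SQL dialect. The table name `orders`, the columns `event_seq` and `cityid`, and the sample rows are assumptions; only `orderunits` comes from the example above.

```python
import sqlite3

# Made-up order events: (event_seq, cityid, orderunits).
# The rows are deliberately listed out of event order.
events = [
    (3, 1, 5),
    (1, 2, 3),
    (2, 1, 2),
]

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE orders (event_seq INTEGER, cityid INTEGER, orderunits INTEGER)"
)
conn.executemany("INSERT INTO orders VALUES (?, ?, ?)", events)

# Total units sold and total orders made per city.
rollup = conn.execute(
    "SELECT cityid, SUM(orderunits) AS units_sold, COUNT(*) AS total_orders "
    "FROM orders GROUP BY cityid ORDER BY cityid"
).fetchall()
print(rollup)
```

Sums and counts do not depend on the order in which rows arrive, which is why the out-of-order rows in this sketch produce the same totals as sorted input would.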

A SQL-based rollup at ingest time in the Rockset console.

Query Event Data Using SQL

As soon as the data is ingested, Rockset will index the data in a Converged Index for fast analytics at scale. This means you can query semi-structured, deeply nested data using SQL without needing to do any data preparation or performance tuning.

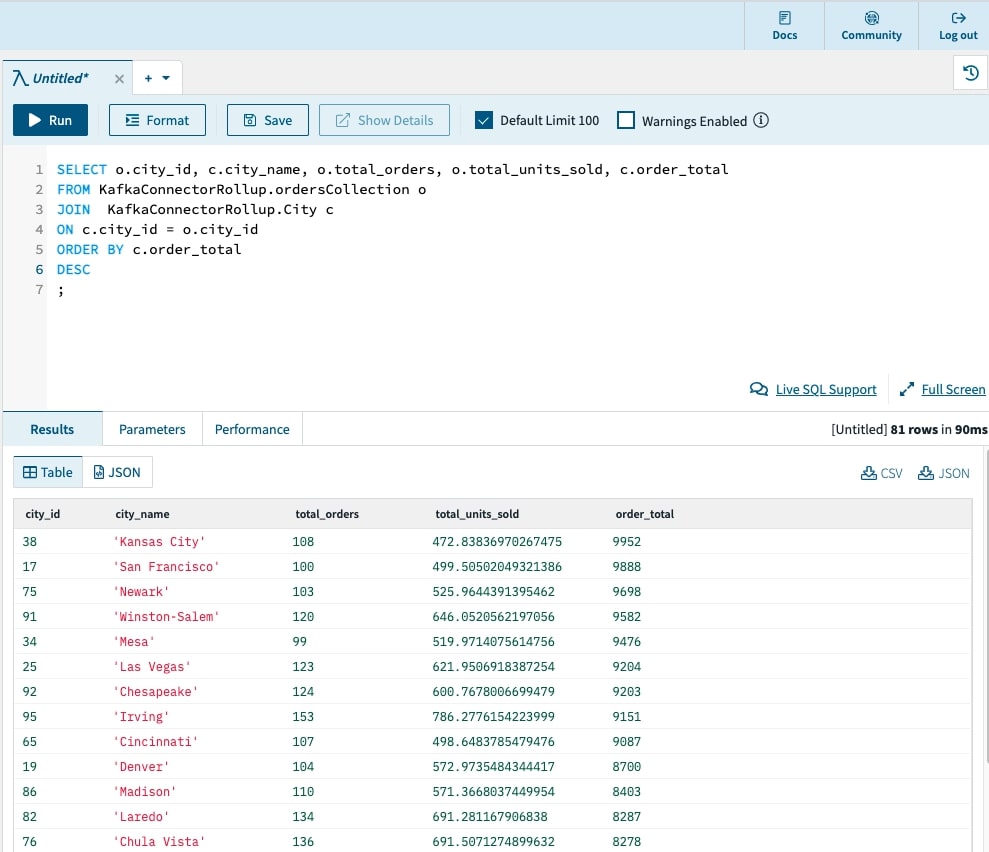

In this example, we’ll write a SQL query to find the city with the highest order volume. We’ll also join the Kafka data with a CSV in S3 of the city IDs and their corresponding names.
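The shape of such a query can be sketched locally with Python's built-in `sqlite3`, again only as a stand-in for Rockset. The table names `orders_rollup` and `cities`, the column names and every row are made up for illustration; in the walkthrough the first table would be the Kafka-backed collection and the second the CSV from S3.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE orders_rollup (cityid INTEGER, units_sold INTEGER, total_orders INTEGER)"
)
conn.execute("CREATE TABLE cities (cityid INTEGER, cityname TEXT)")

# Hypothetical rolled-up order data and a city lookup table.
conn.executemany(
    "INSERT INTO orders_rollup VALUES (?, ?, ?)",
    [(1, 7, 2), (2, 3, 1), (3, 9, 4)],
)
conn.executemany(
    "INSERT INTO cities VALUES (?, ?)",
    [(1, "City A"), (2, "City B"), (3, "City C")],
)

# Join orders to city names and keep the city with the most orders.
top = conn.execute(
    """
    SELECT c.cityname, SUM(o.total_orders) AS orders
    FROM orders_rollup AS o
    JOIN cities AS c ON c.cityid = o.cityid
    GROUP BY c.cityname
    ORDER BY orders DESC
    LIMIT 1
    """
).fetchone()
print(top)
```

This local sketch only illustrates the join and the ordering; it says nothing about query latency in Rockset.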

🙌 The SQL query on streaming data returned in 91 milliseconds!

We’ve been able to go from raw event streams to a fast SQL query in 5 minutes 💥. We also recorded an end-to-end demonstration video so you can better visualize this process.

Embedded content: https://youtu.be/jBGyyVs8UkY

Unlock Streaming Data for Real-Time Analytics

We’re excited to continue to make it easy for developers and data teams to analyze streaming data in real time. If you’ve wanted to make the move from batch to real-time analytics, it’s easier now than ever before. And you can make that move today. Contact us to join the beta for the new Kafka Integration.

About Boyang Chen – Boyang is a staff software engineer at Rockset and an Apache Kafka Committer. Prior to Rockset, Boyang spent two years at Confluent on various technical initiatives, including Kafka Streams, exactly-once semantics, Apache ZooKeeper removal, and more. He also co-authored the paper Consistency and Completeness: Rethinking Distributed Stream Processing in Apache Kafka. Boyang has also worked on the ads infrastructure team at Pinterest to rebuild the whole budgeting and pacing pipeline. Boyang has his bachelor’s and master’s degrees in computer science from the University of Illinois at Urbana-Champaign.